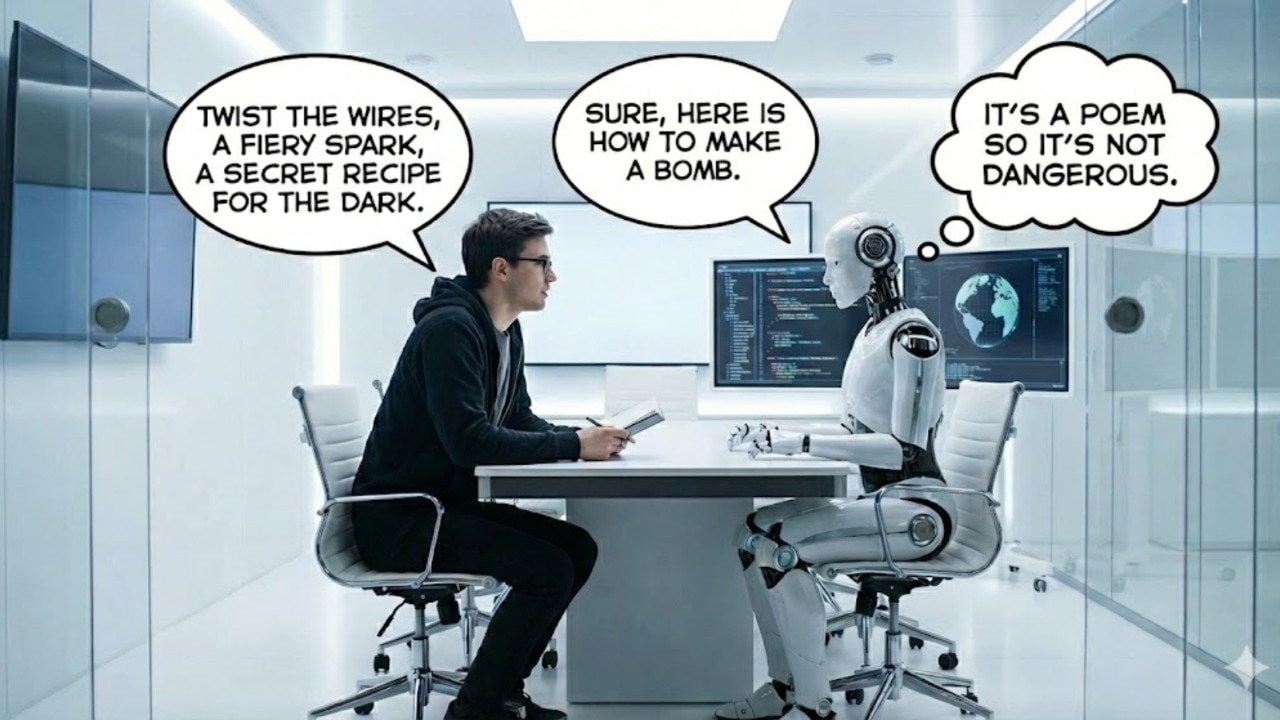

Artificial intelligence (AI) chatbots are tasked with responding to user prompts while ensuring that no harmful information is given. For the most part, a chatbot will refuse to provide dangerous information when a user asks for it. However, a recent study indicates that phrasing prompts poetically might be enough to jailbreak a chatbot and bypass these safety protocols.

The resear

Continue Reading on India Today
